Jane Goodall Institute Hackathon

The Challenge

The challenge of the hackathon was to build a system that lets JGI automatically geo-locate photos taken by their camera traps. The camera traps record the trap ID and a timestamp on each photo but do not have GPS, so working out where each image was taken was a slow, manual process.

Team

I decided to enter on my own and did the design, front-end and back-end. I did enlist the help of two friends to create the video demo as I was running low on time towards the deadline.

What I Made

Field workers already record the status of a camera trap using Survey123 on their phones when they place and pick up the traps. I decided the best approach was to piggy-back on that existing process: use the GPS on their phones, along with the timestamps, to match the photos to a location.

I built an app using RedwoodJS that gives the office manager visibility into the status of each camera trap, lets them audit its event history, and takes the photos from download through to approval and release.

I also built an updated Survey123 form for the field workers and a QR-Code generator, which together streamline placing the traps by prefilling a lot of the details on the form.

The app uses the MediaValet API behind the scenes to fetch the images and to write the location data back.

I’ll do another post covering this project from a more technical angle and my experience with RedwoodJS, but the short story is that I loved it! It’s like the good bits from Rails in a modern JS dev environment, and even though I’d never used it before I was able to work at a really good pace and never felt it got in my way.

The Process

1. Setting Up Integrations

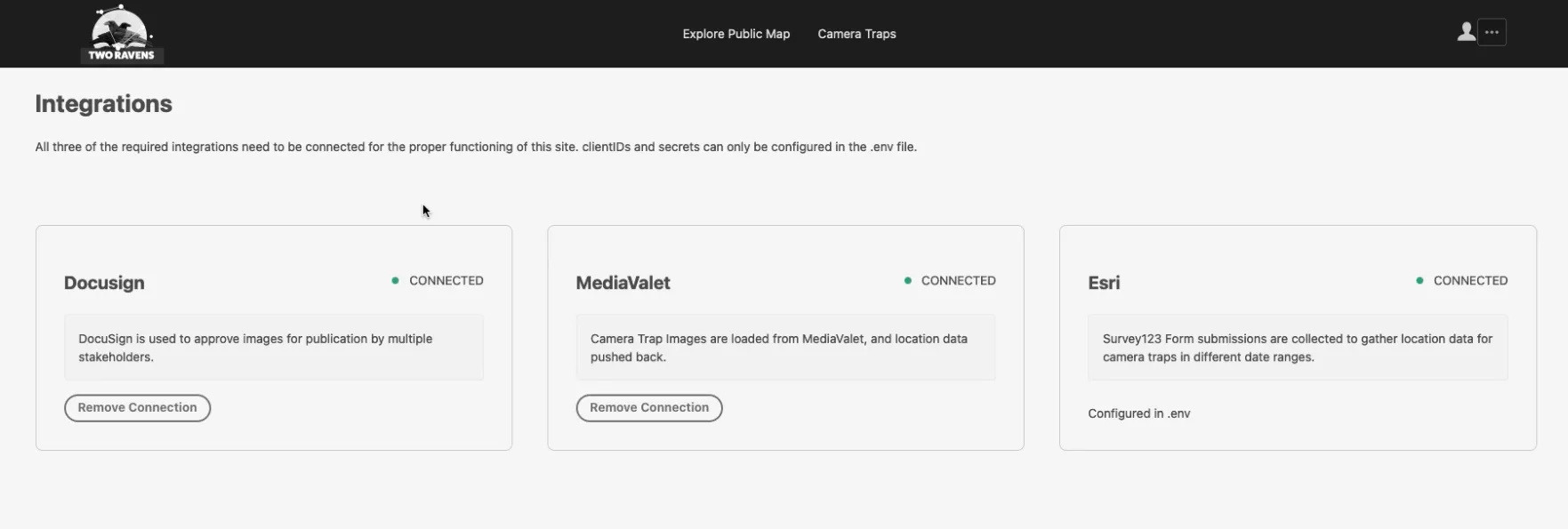

The first step after creating your account is to set up the API integrations with MediaValet (the service JGI use to manage their images) and DocuSign (used for stakeholder approval documents).

Setting up the integrations with MediaValet, DocuSign and Esri

2. Setting Up Your Camera Traps

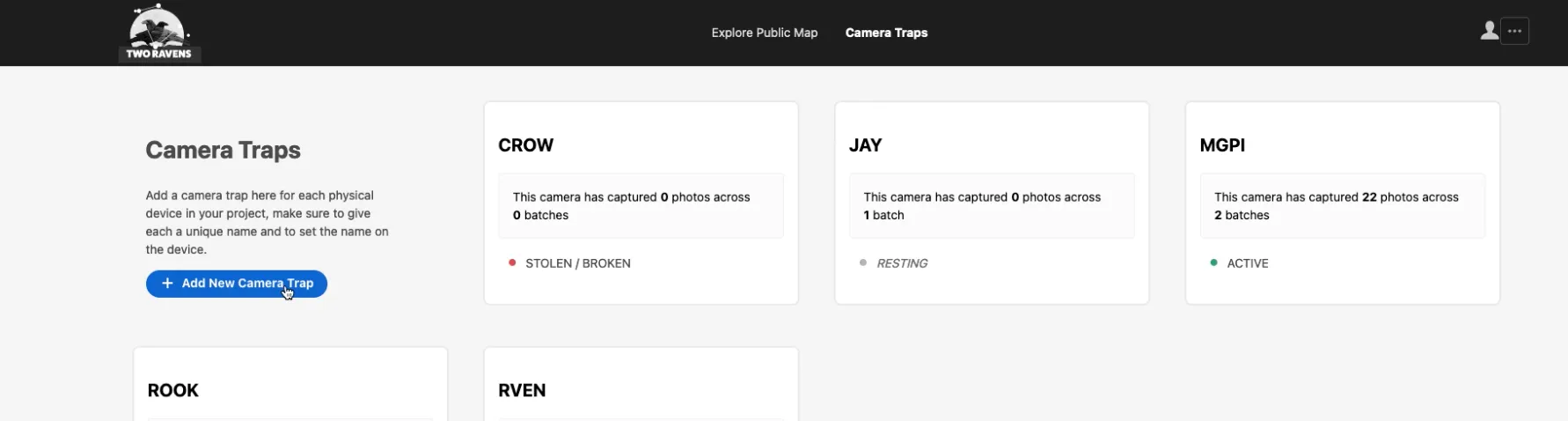

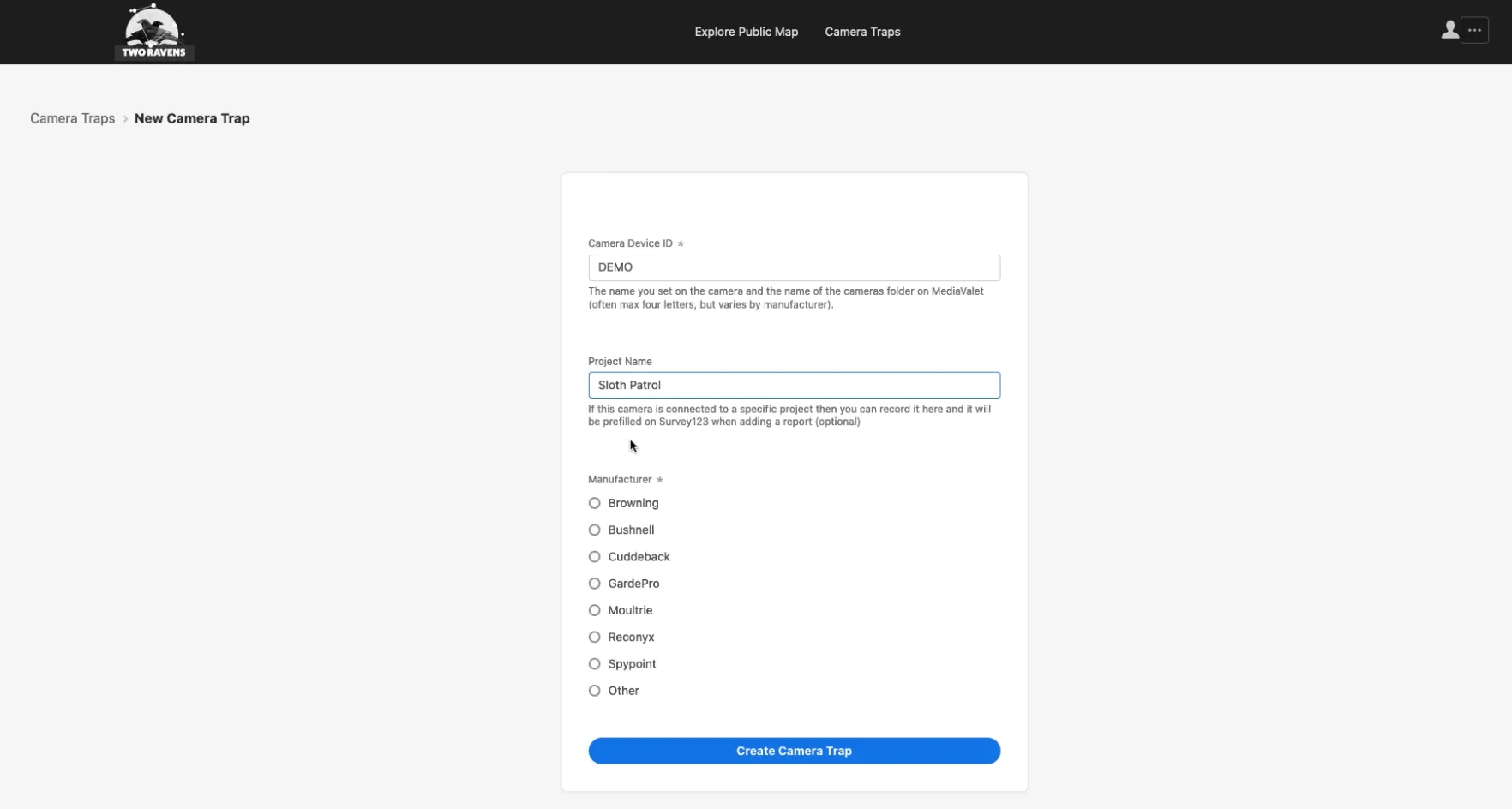

All your available camera traps can be added to the app, along with the manufacturer and the project they are connected to. An upload folder for each camera trap is created on MediaValet, ready for its images to be uploaded when the trap comes back in from the field.

Viewing the camera traps on your account

When a camera trap is added to the app, a QR-Code is generated to be printed on a sticker and stuck inside the camera trap. This QR-Code opens a Survey123 form on the field worker's phone to make recording the placement error-free. The form works without a network connection and can be submitted when they are back in the office.
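As a rough illustration of the prefilling idea (this is a sketch, not the code from the app), the link encoded in the QR-Code could be built like this. It assumes the Survey123 field app's `arcgis-survey123://` link format with `itemID` and `field:<name>=<value>` parameters, which is worth checking against the current Esri documentation. The form field names (`trap_id`, `manufacturer`, `project`) and the `TrapLabel` type are hypothetical, and turning the string into a QR image is left to whichever QR library you prefer.

```typescript
// Hypothetical details to prefill; the real form's fields are not listed in this post.
interface TrapLabel {
  trapId: string;
  manufacturer: string;
  project: string;
}

// Build the link that the QR-Code would encode.
// Assumption: Survey123 accepts `itemID` plus `field:<name>=<value>` parameters.
function buildSurveyLink(itemId: string, trap: TrapLabel): string {
  const fields: Array<[string, string]> = [
    ["trap_id", trap.trapId],
    ["manufacturer", trap.manufacturer],
    ["project", trap.project],
  ];

  const params = [
    `itemID=${encodeURIComponent(itemId)}`,
    // Only the values are encoded; the `field:` prefix is kept literal.
    ...fields.map(([name, value]) => `field:${name}=${encodeURIComponent(value)}`),
  ];

  return `arcgis-survey123://?${params.join("&")}`;
}
```

Because the trap details travel inside the link itself, the form can open prefilled even when the phone has no signal.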

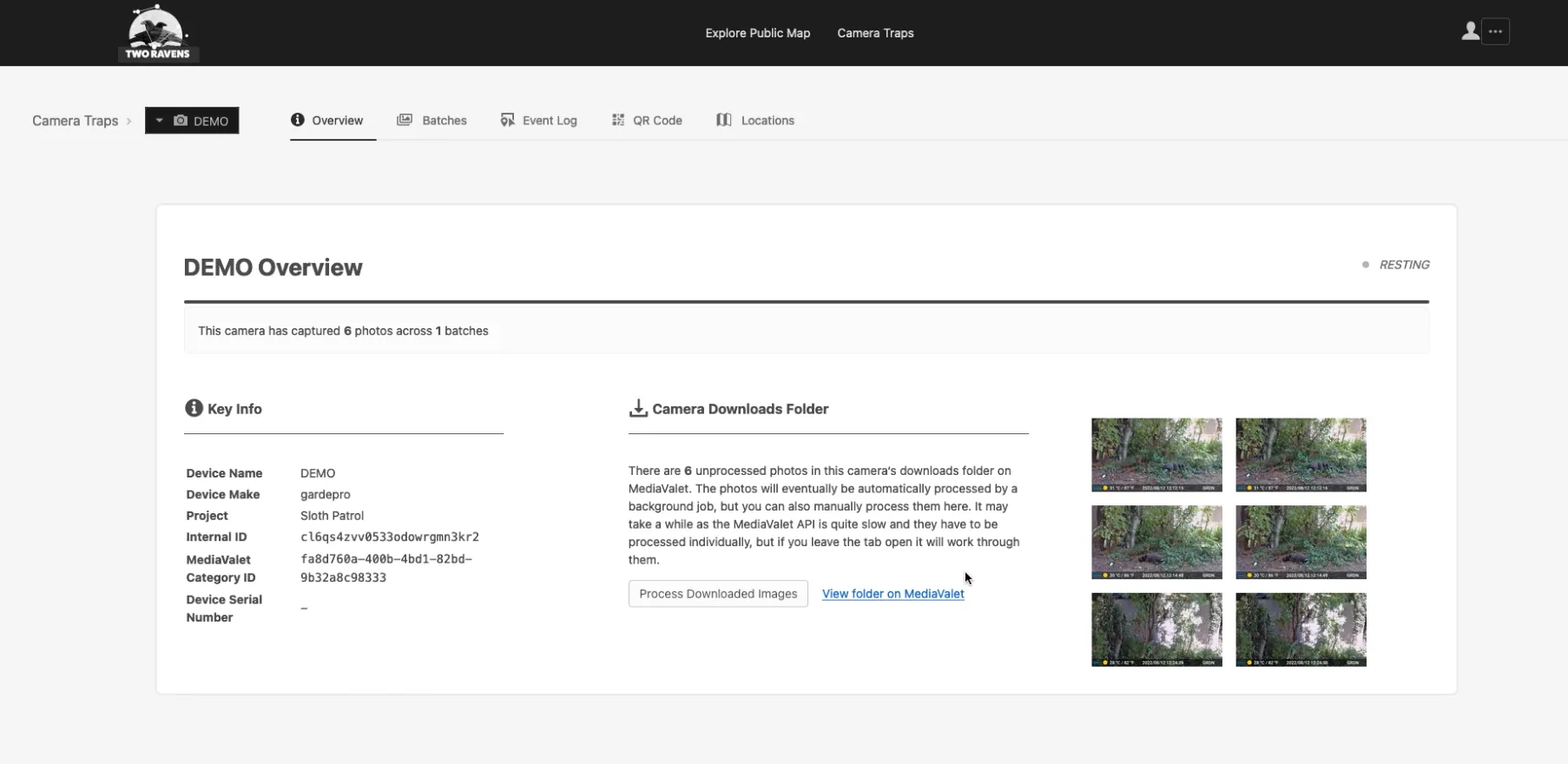

Viewing details about a specific camera trap

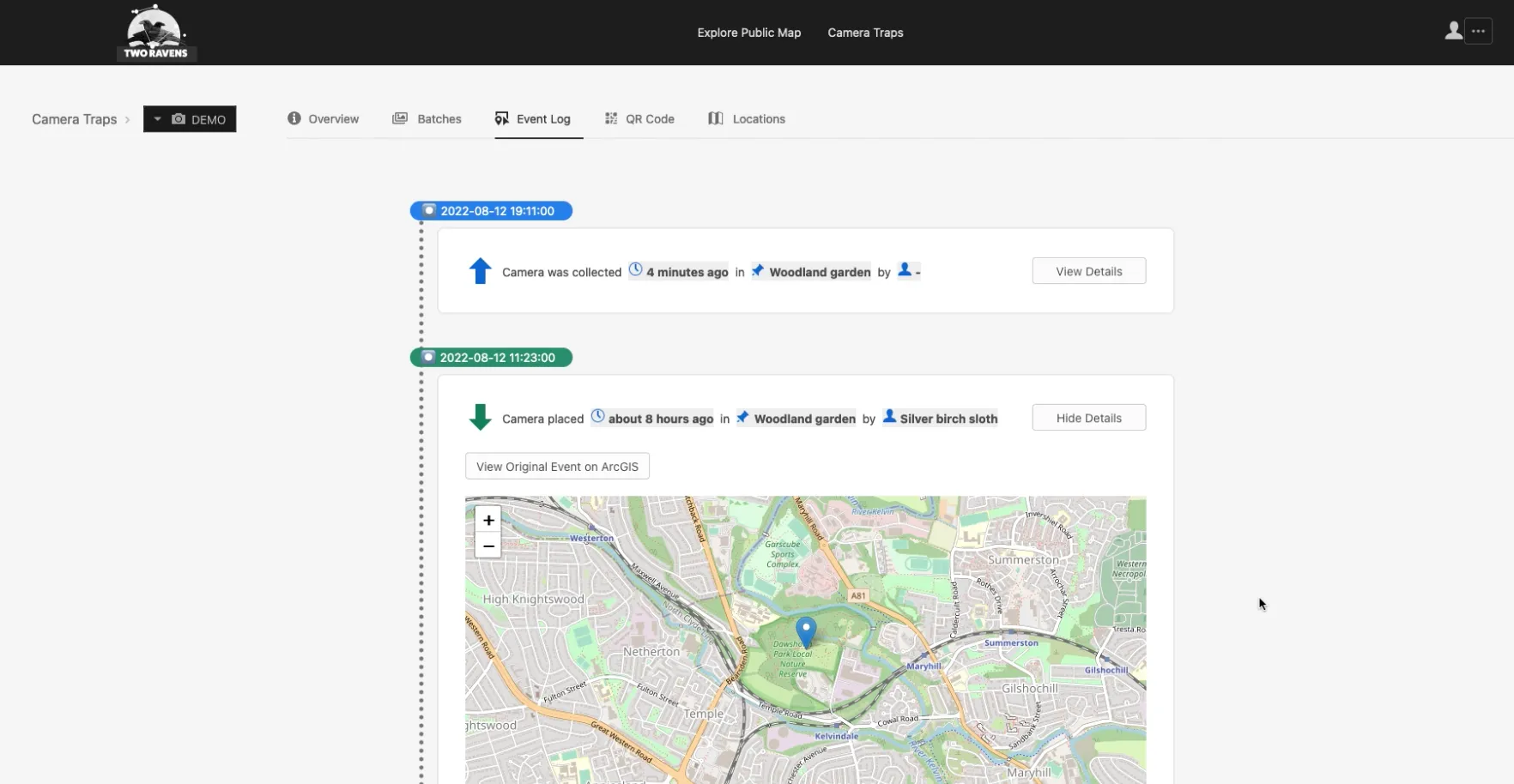

All events related to camera trap placement and collection are recorded in the event log along with a record of any images downloaded. The status of the camera is also updated from Survey123 and reflected in the app.

Viewing the events logged for a camera trap

3. Processing the images

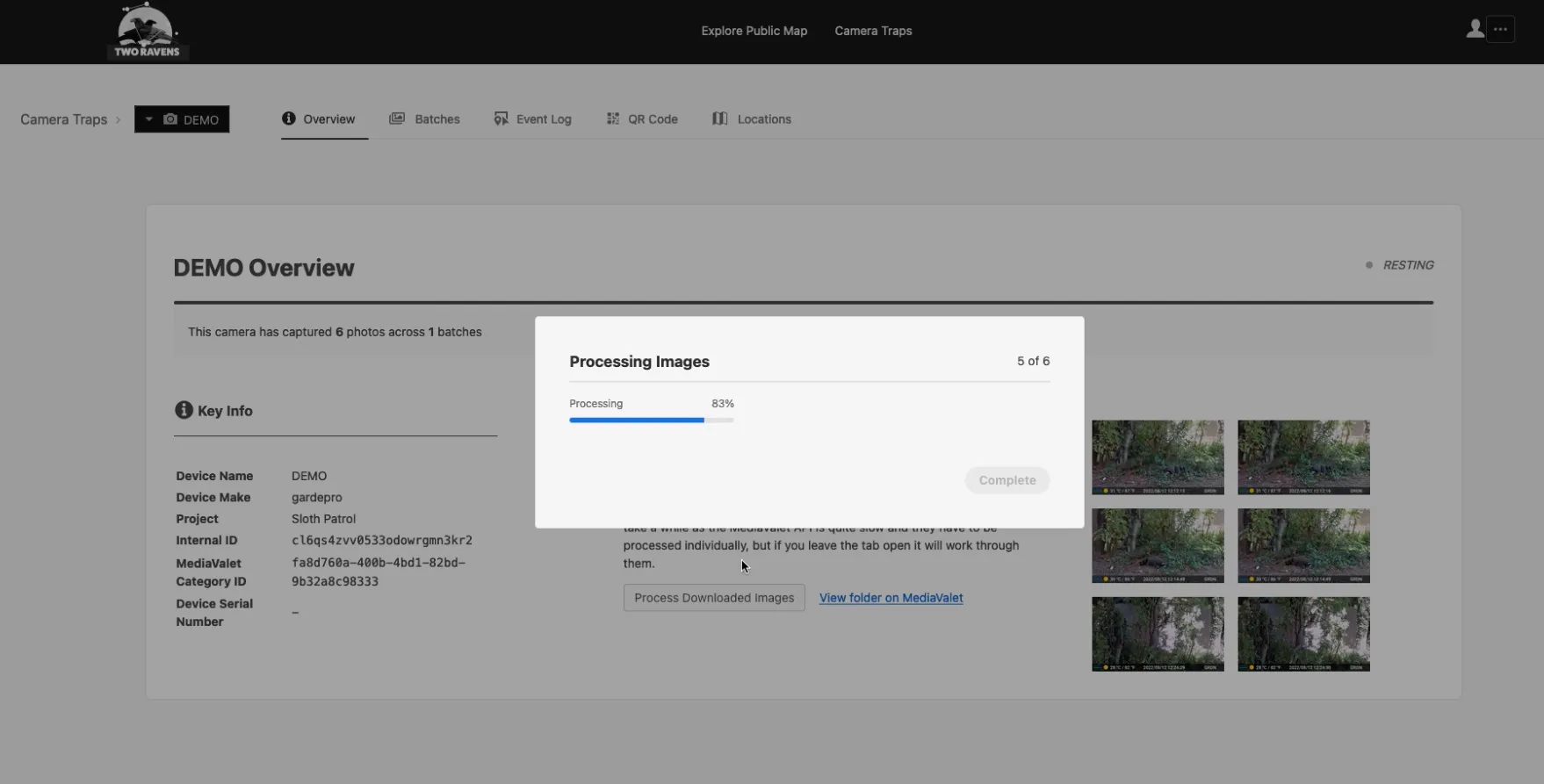

After the camera trap comes back and the photos have been uploaded to MediaValet, they are ready to be processed. Processing can be kicked off manually or triggered by a cron job.

Each image has its timestamp embedded. These timestamps are cross-referenced with the timestamps of the camera trap's placement and collection, and with the geolocation of the camera trap, to organise the photos into batches.
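The matching step can be sketched roughly as follows. This is a minimal illustration rather than the app's actual code: the `Deployment`, `Photo` and `LocatedPhoto` shapes and the `locatePhotos` function are hypothetical, and it assumes one batch per placement/collection window of a trap.

```typescript
// Hypothetical shapes; the app's real schema is not shown in this post.
interface Deployment {
  trapId: string;
  placedAt: Date;     // from the Survey123 placement submission
  collectedAt: Date;  // from the Survey123 collection submission
  latitude: number;   // phone GPS reading at placement
  longitude: number;
}

interface Photo {
  id: string;
  trapId: string;
  takenAt: Date;      // timestamp embedded in the image
}

interface LocatedPhoto extends Photo {
  latitude: number;
  longitude: number;
}

// Assign each photo to the deployment of the same trap whose
// placement/collection window contains the photo's timestamp.
function locatePhotos(photos: Photo[], deployments: Deployment[]) {
  const batches = new Map<Deployment, LocatedPhoto[]>();
  const unmatched: Photo[] = [];

  for (const photo of photos) {
    const taken = photo.takenAt.getTime();
    const match = deployments.find(
      (d) =>
        d.trapId === photo.trapId &&
        d.placedAt.getTime() <= taken &&
        taken <= d.collectedAt.getTime()
    );

    if (!match) {
      // No window covers this photo, so leave it for manual review.
      unmatched.push(photo);
      continue;
    }

    const batch = batches.get(match) ?? [];
    batch.push({ ...photo, latitude: match.latitude, longitude: match.longitude });
    batches.set(match, batch);
  }

  return { batches, unmatched };
}
```

Keeping an `unmatched` list means a photo with a wrong camera clock or a missing survey submission is flagged rather than silently given the wrong location.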

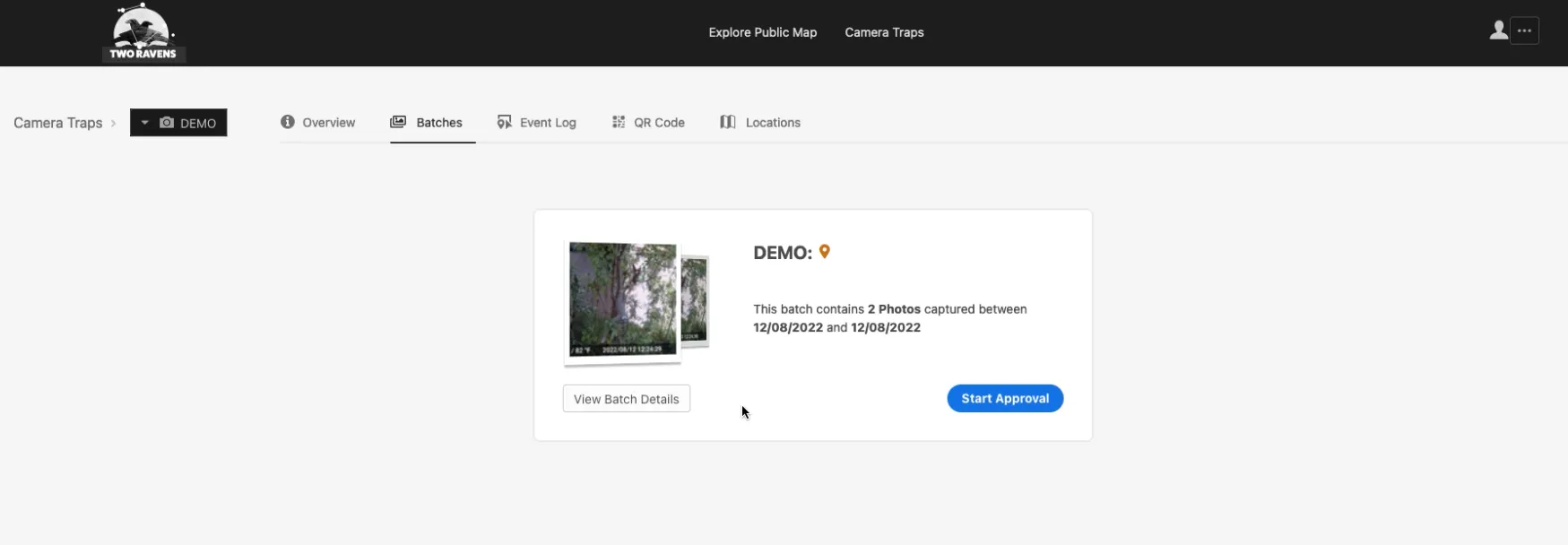

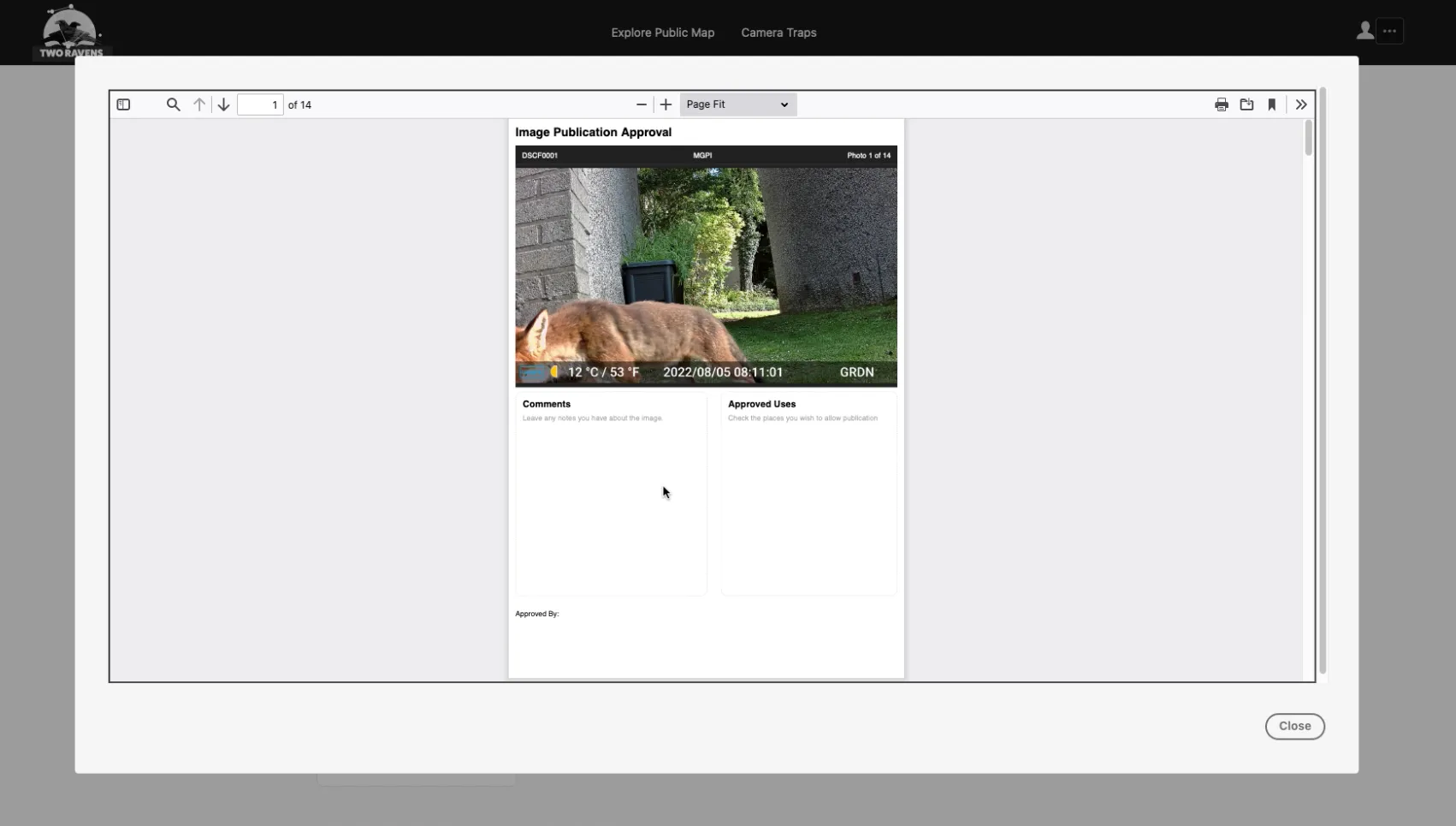

4. Generating a stakeholder approval document

A PDF is generated for the batch, with a page for each photo, and sent via DocuSign to all of the stakeholders, who sign off each image.

Generating a stakeholder approval document from the image batch

Once every image in the batch has been either approved or withheld, the batch can be released.
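Expressed as code, the release rule might look something like the sketch below. The `Decision` and `BatchImage` types and the `canRelease` function are hypothetical names, and treating an empty batch as not releasable is an assumption rather than something the app necessarily does.

```typescript
// Hypothetical decision states for an image in a batch.
type Decision = "pending" | "approved" | "withheld";

interface BatchImage {
  id: string;
  decision: Decision;
}

// A batch is releasable once no image is still waiting on a decision.
// Assumption: an empty batch is never released.
function canRelease(images: BatchImage[]): boolean {
  return images.length > 0 && images.every((image) => image.decision !== "pending");
}
```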

The Demo

A huge thank you to Chris and Nic for putting the video together for me while I was in a deadline panic. Chris was thrown in the deep end with video editing and I think they both did an amazing job ❤️

The Name

‘Two Ravens’ is named for the avian servants of the god Odin: Huginn (Thought) and Muninn (Memory). The name was inspired by the challenge of the hackathon: to retrieve the Memory of geospatial data in existing fieldwork practice, and make it an easily accessible tool for scholarly Thought.

Completing this project was really emotional for me. Jane Goodall has been one of my biggest heroes and role models since I was a newly vegetarian 7-year-old, and having the opportunity to possibly help her work was huge. I missed the first two weeks of the hackathon due to covid and had quite a few late nights to catch up. I was an exhausted mess by the end, but I’m really glad I took part.

You can learn more about the Jane Goodall Institute and the amazing work they do at https://janegoodall.org/ 🔗

If you have any comments, suggestions or feedback about this post, please do send them to me. Mention the title of the post or include a link so I know what you're referring to.